The second annual CyFyAfrica 2019, the Conference on Technology, Innovation, and Society [1], took place in Tangier, Morocco, from 7 to 9 June 2019. It was a vibrant, diverse and dynamic gathering attended by policy makers, UN delegates, ministers, governments, diplomats, media, tech corporations, and academics from over 65 nations, mostly African and Asian countries. The conference unapologetically stated that its central aim is to bring the continent’s voices to the table in the global discourse. The president’s opening message emphasised that Africa’s youth need to be put front and centre of African concerns as the continent increasingly relies on technology for its social, educational, health, economic and financial issues. At the heart of the conference was the need to provide a platform for the voices of young people across the continent. And rightly so. It needs no argument that Africans across the continent need to play a central role in determining crucial technological questions and answers, not only for their continent but also far beyond.

In the race to make the continent teched-up, there are numerous cautionary tales that the continent needs to learn from. Otherwise we run the risk of repeating them and the cost of doing so is too high. To that effect, this piece outlines three major lessons that those involved in designing, implementing, importing, regulating, and communicating technology need to be aware of.

The continent stands to benefit from various technological and Artificial Intelligence (AI) developments. Ethiopian farmers, for example, can benefit from crowdsourced data to forecast and improve crop yields. The use of data can help improve services within the health care and education sectors. The country’s huge gender inequalities, which plague every social, political and economic sphere, can be brought to the fore through data. Data that exposes gender disparities in key positions, for example, makes clear the need to change societal structures so that women can serve in those positions. Such data also raises general awareness of inequalities, which is central to progressive change.

Having said that, this is not what I want to discuss here. There already exist countless die-hard technology worshippers, some only too happy to blindly adopt anything “data-driven” or “AI” without a second thought for the possible unintended consequences, both within and outside the continent. Wherever technological innovation is discussed, what we constantly find are advocates of technology and attempts to digitise every aspect of life, often at any cost.

In fact, if most of the views put forward by various ministers, tech developers, policy makers and academics at the CyFyAfrica 2019 conference are anything to go by, we have plenty of such tech evangelists, blindly accepting ethically suspect and dangerous practices and applications under the banner of “innovative”, “disruptive” and “game changing” with little, if any, criticism or scepticism. Therefore, given that we have enough tech worshippers holding the technological future of the continent in their hands, it is important to point out the cautions that need to be taken and the lessons that need to be learned from other parts of the world as the continent pushes ahead in the technological race.

Just as in Silicon Valley, African tech start-ups and “innovations” can be found in every possible sphere of life in every corner of the continent, from Addis Ababa to Nairobi to Abuja to Cape Town. These innovations span areas such as banking, finance, health care, education, and even “AI for good” initiatives, and come from companies and individuals both within and outside the continent. Understandably, companies, individuals and initiatives want to solve society’s problems, and data and AI seem to provide quick solutions. As a result, attempts to fix complex social problems with technology are rife. And this is exactly where problems arise.

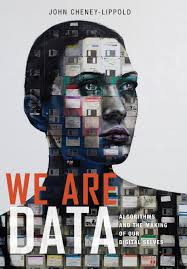

In the race to see which start-up will build the next smart home system or state-of-the-art mobile banking application, we lose sight of the people behind each data point. The emphasis is on “data” as something that is up for grabs, something that uncontestedly belongs to tech companies, governments, and the industry sector, completely erasing the individual people behind each data point. This erasure makes it easy to “manipulate behaviour” or “nudge” users, often towards outcomes that are profitable for the companies. The rights of the individual, the long-term social impacts of AI systems and the unintended consequences for the most vulnerable are pushed aside, if they ever enter the discussion at all. Be it small start-ups or more established companies that design and implement AI tools, at the top of their agenda is the collection of more data and more efficient AI systems, not the welfare of individual people or communities. Rather, whether explicitly laid out or not, the central point is to analyse, infer, and deduce “users’” weaknesses and deficiencies and how these can be used to the benefit of commercial firms. Products, ads, and other commodities can then be pushed to individual “users” as if they were objects to be manipulated and nudged towards certain behaviours deemed “correct” or “good” by these companies and developers.

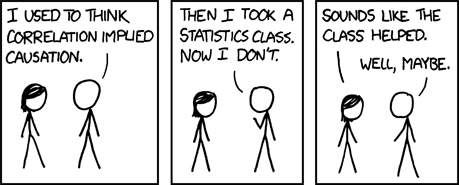

The result is AI systems that alter the social fabric, reinforce societal stereotypes and further disadvantage those already at the bottom of the social hierarchy, while we insist that these systems are politically neutral under the guise of “AI” and “data-driven”. UN delegates addressing online terrorism and counterterrorism measures while exclusively discussing Islamic terrorist groups, despite white supremacist terrorist groups carrying out more attacks than any other group in recent years [2], is one example of how socially held stereotypes are reinforced and wielded in the AI tools that are being developed.

Although it is hardly ever made explicit, many of the ethical principles underlying AI rest firmly within utilitarian thinking. Even when knowledge of unfairness towards and discrimination against certain groups and individuals as a result of algorithmic decision-making is brought to the fore, solutions that benefit the majority are sought. For instance, women have been systematically excluded from entering the tech industry [3], minorities forced into inhumane treatment [4], and systematic biases have been embedded in predictive policing systems [5], to mention but a few. However, although society’s most vulnerable are disproportionately impacted by the digitisation of various services, proposed solutions to mitigate unfairness hardly ever consider such groups a crucial piece of the solution.

Machine bias and unfairness are issues that the rest of the tech world is grappling with. As technological solutions are increasingly devised and applied to social, economic and political issues, so the problems that arise with the digitisation and automation of everyday life multiply. The current attempts to develop “ethical AI” and “ethical guidelines”, both within the Western tech industry and in the academic sphere, illustrate an awareness of these problems and an attempt to mitigate them. Key global players in technology, such as Microsoft [6] and Google’s DeepMind [7] from the industry sector and Harvard and MIT [8] from the academic sphere, are primary examples that illustrate the recognition of the possible catastrophic consequences of AI for society. As a result, ethics boards and curricula on ethics and AI are being developed.

These approaches to developing, implementing and teaching responsible and ethical AI take multiple forms, perspectives and directions, and emphasise various aspects. This multiplicity of views and perspectives is not a weakness but rather a desirable strength, which is necessary for accommodating a healthy, context-dependent remedy. Insisting on one single framework for the various ethical, social and economic issues that arise in different contexts and cultures with the integration of AI is not only unattainable but also amounts to advocating a one-size-fits-all dictatorship rather than a guideline.

Nonetheless, given the countless technology-related disasters and cautionary tales that the global tech community is waking up to, there are numerous crucial lessons that African developers, start-ups and policy makers can learn. The African continent need not go through its own disastrous cautionary tales to discover the dark side of the digitisation and technologisation of every aspect of life.

AI is not magic, and anything that makes it come across as magic needs to be disposed of

AI is a buzzword that gets thrown around so carelessly that it has increasingly become vacuous. What AI refers to is notoriously contested and the term is impossible to define conclusively, and it will remain that way because of the many ways different disciplines define and use it. Artificial intelligence can refer to anything from highly overhyped and deceitful robots [9], to Facebook’s machine learning algorithms that dictate what you see on your News Feed, to your “smart” fridge and everything in between. “Smart”, like AI, has increasingly come to mean devices that are connected to other devices and servers, with little to no attention being paid to how such hyperconnectivity at the same time creates surveillance systems that deprive individuals of their privacy.

Over-hyped and exaggerated representation of the current state of the field poses a major challenge. Both researchers within the field and the media contribute to this over-hype. The public is often made to believe that we have reached AGI (Artificial General Intelligence), or that we are at risk of killer robots [10] taking over the world, or that Facebook’s algorithms have created their own language, forcing Facebook to shut down its project [11], when none of this is in fact correct. The robot known as Sophia is another example of AI over-hype and misrepresentation, one that shows the disastrous consequences of a lack of critical appraisal. This robot, which is best described as a machine with some face recognition capabilities and a rudimentary chatbot engine, is falsely described as semi-sentient by its maker. In a nation where women are treated as second-class citizens, Saudi Arabia granted this machine citizenship, treating the female-gendered machine better than its own female citizens. Similarly, neither the Ethiopian government nor the media attempted to pause and reflect on how the robot’s stay in Addis Ababa [12] should be covered. Instead the over-hype and deception were amplified as the robot was treated as some God-like entity.

Leading scholars of the field such as Mitchell [13] emphasise that we are far from “superintelligence”. The current state of AI is marked by crucial limitations such as the lack of common sense, which is a crucial element of human understanding. Similarly, Bigham [14] emphasises that in most of the discussion regarding “autonomous” systems (be it robots or speech recognition algorithms), a heavy load of the work is done by humans, often as cheap labour, a fact that is put aside because it doesn’t sit well with the AI over-hype narrative.

Over-hype is not only a problem that portrays an unrealistic image [15] of the field, but also one that distracts attention away from the real danger of AI, which is much more invisible, nuanced and gradual than “killer robots”. The simplification and extraction of human experience for capitalist [16] ends, which is then presented as behaviour-based “personalisation”, is a seemingly banal practice on the surface but one that needs more attention and scrutiny. Similarly, algorithmic predictive models of behaviour that infer habits, behaviours and emotions need to be of concern, as most of their inferences reflect strongly held biases and unfairness rather than getting at any in-depth causes or explanations.

The continent would do well to adopt a dose of critical appraisal when presenting, developing and reporting on AI. This requires challenging the mindset that portrays AI as having God-like power, and seeing AI instead as a tool that we create, control and are responsible for, not as something that exists and develops independently of those who create it. Like any other tool, AI embeds and reflects our inconsistencies, limitations, biases, and political and emotional desires. It is like a mirror that reflects how society operates: unjust and prejudiced against some individuals and groups.

Technology is never neutral or objective: it is like a mirror that reflects societal bias, unfairness and injustice

AI tools deployed in various spheres are often presented as objective and value free. In fact, some automated systems in domains such as hiring [17] and policing [18] are put forward with the explicit claim that these tools eliminate human bias. Automated systems, after all, apply the same rules to everybody. Such a claim is in fact one of the single most erroneous and harmful misconceptions as far as automated systems are concerned. As the Harvard-trained mathematician Cathy O’Neil [19] explains, “algorithms are opinions embedded in code”. This widespread misconception further prevents individuals from asking questions and demanding explanations. How we see the world and how we choose to represent the world is reflected in the algorithmic models of the world that we build. The tools we build necessarily embed, reflect and perpetuate socially and culturally held stereotypes and unquestioned assumptions. Any classification, clustering or discrimination of human behaviours and characteristics that our AI systems produce reflects socially and culturally held stereotypes, not an objective truth.

UN delegates working on online counterterrorism measures but explicitly focusing on Islamic groups, despite over 60 percent [20] of mass shootings in the USA in 2019 being carried out by white nationalist extremists, is a worrying example of how stereotypically held views drive what we perceive as a problem and, furthermore, the type of technology we develop.

A robust body of research as well as countless reports [21] of individual personal experience illustrate that various applications of algorithmic decision-making result in biased and discriminatory outcomes. These discriminatory outcomes affect individuals and groups who are already on society’s margins, those who are viewed as deviants and outliers, people who refuse to conform to the status quo. Given that the most vulnerable are affected by technology the most, it is important that their voices are central in the design and implementation of any technology that is used on or around them. Their voices need to be prioritised at every step of the way, including in the design, development and implementation of any technology as well as in policy making.

As Africa grapples with both catching up with the latest technological developments and protecting against the harm that technology causes, policy makers, governments and firms that develop and apply various technologies to the social sphere need to think long and hard about what kind of society we want and what kind of society technology drives. Protecting and respecting the rights, freedoms and privacy of the very youth that the leaders want to put front and centre should be prioritised. This can only happen with guidelines and safeguards for individual rights and freedoms in place.

Invasion of privacy and the erosion of humane treatment of the human

AI technologies are gradually being integrated into decision-making processes in every sphere of life, including insurance, banking, health and education services. Various start-ups are emerging from all corners of the continent at an exponential rate to develop the next “cutting edge” app, tool or system; to collect as much data as possible and then infer and deduce “users’” various behaviours and habits. However, there seems to be little, if any, attention paid to the fact that the digitisation and automation of such spheres necessarily brings its own, often not immediately visible, problems. In the race to come up with the next new “nudge” [22] mechanism that could be used in insurance or banking, the competition to mine the most data seems to be the central agenda. These firms take it for granted that such “data”, which is out there for the grabbing, automatically belongs to them. The discourse around “data mining” and the “data rich continent” shows the extent to which the individual behind each data point remains non-existent. This removal of the individual (an individual with fears, emotions, dreams and hopes) behind each data point is symptomatic of how little attention is given to privacy concerns. This discourse of “mining” people for data is reminiscent of the coloniser attitude that declares humans to be raw material free for the taking.

Data is necessarily always about something and never about an abstract entity. The collection, analysis and manipulation of data potentially entails monitoring, tracking and surveilling people. This necessarily impacts them directly or indirectly, whether through a change in their insurance premiums or a refusal of services.

AI technologies that aid decision making in the social sphere are, for the most part, developed and implemented by the private sector and various start-ups, whose primary aim is to maximise profit. Protecting individual privacy rights and cultivating a fair society is therefore low on their agenda, especially if such practice gets in the way of “mining”, freely manipulating behaviour and pushing products onto customers. This means that, as we hand over decision making regarding social issues to automated systems developed by profit-driven corporations, not only are we allowing our social concerns to be dictated by corporate incentives (profit), but we are also handing over moral questions to the corporate world. “Digital nudges”, behaviour modifications developed to suit commercial interests, are a prime example. As “nudging” mechanisms become the norm for “correcting” individuals’ behaviour, eating habits or exercise routines, the corporations, private sector actors and engineers developing automated systems are bestowed with the power to decide what the “correct” behaviour, eating or exercise habit is. Questions such as who is deciding what the “correct” behaviour is, and for what purpose, are often completely ignored. In the process, individuals who do not fit our stereotypical image of what a “fit body”, “good health” and a “good eating habit” are end up being punished, cast out and pushed further to the margins.

The use of technology within the social sphere often, intentionally or accidentally, focuses on punitive practices, whether it is to predict who will commit the next crime or who will fail to pay their mortgage. Constructive and rehabilitative questions, such as why people commit crimes in the first place or what can be done to rehabilitate and support those who have come out of prison, are almost never asked. Technological developments built and applied with the aim of bringing security and order necessarily bring cruel, discriminatory and inhumane practices to some. The cruel treatment of the Uighurs in China [23] and the unfair disadvantaging of the poor [24] are examples in this regard.

The question of the technologisation and digitalisation of the continent is also a question of what kind of society we want to live in. African youth solving their own problems means deciding what we want to amplify and show the rest of the world. It also means not importing the latest state-of-the-art machine learning systems or any other AI tools without questioning what the underlying purpose is, who benefits, and who might be disadvantaged by the application of such tools. Moreover, African youth playing a part in the AI field means creating programs and databases that serve various local communities, not blindly importing Western AI systems founded upon individualistic and capitalist drives. In a continent where much of the narrative is hindered by negative images such as migration, drought, and poverty, using AI to solve our problems ourselves means using AI in a way that reflects how we want to understand who we are and how we want to be understood and perceived: a continent where community values triumph and nobody is left behind.

I gave a talk on the above title at

There is no area untouched by data science and computer science. From medicine, to the criminal justice system, to banking & insurance, to social welfare, data driven solutions and automated systems are proposed and developed for various social problems. The fact that computer science is intersecting with various social, cultural and political spheres means leaving the realm of the “purely technical” and dealing with human culture, values, meaning, and questions of morality; questions that need more than technical “solutions”, if they can be solved at all. Critical engagement and ethics are, therefore, imperative to the growing field of computer science.

A guide to understanding the inner workings and outer limits of technology and why we should never assume that computers always get it right.

AI is often thought of as a recent development, or worse, as futuristic, something that will happen in the far future. We tend to forget that dreams, aspirations and fascinations with AI go back in history back to antiquity. In this regard, Rene Descartes’s

The ninth century Persian mathematician Muḥammad ibn Mūsā al-Khwārizmī who gave us one of the earliest mathematical algorithms. The word “algorithm” comes from mispronunciation of his name.